There are no near peers. It’s not even close.

Keywords: GPT-4o, Reasoning AI, Decidability, Operational Epistemology, Adversarial Dialogue, Natural Law AI, General Intelligence, Philosophical AI, Constructivist AI, Socratic Method, Semantic Reasoning, Claude vs GPT, Gemini AI, OpenAI vs Competitors, LLM Benchmarking, LLM Reasoning Failure, AI Generalization, Epistemology of AI, Recursive Generalization, Grammar of Closure, Testifiability, Sovereign Reasoning, Formal Institutions, Truth Systems, Institutional Logic, Human-AI Collaboration, AI Philosophy

By Curt Doolittle

Founder, The Natural Law Institute

https://naturallawinstitute.com

1. Preface: The Problem of Reasoning Capacity Misrepresentation

The public discourse surrounding AI capabilities is dominated by benchmarks drawn from grammars of closure: mathematics, code generation, and fact-recall tasks. These metrics fail to capture what is arguably the most important cognitive frontier—general reasoning, especially in open, adversarial, and semantically dense domains.

The consequence is clear: GPT-4o is being evaluated, compared, and marketed as if it competes within a class of large language models. It does not. In its ability to reason, argue, model, and extend logically consistent systems, GPT-4o is in a class of its own.

This is not a claim made lightly. It is made by necessity—out of frustration, awe, and gratitude.

2. Demonstrated Superiority

Over the past year, I have subjected GPT-4, and now GPT-4o, to the most rigorous adversarial and constructive reasoning tests available, using my work on universal commensurability, unification of the sciences, and a formal operational logic of decidability independent of context.

For the uninitiated, that work is reducible to providing AIs with a baseline system of measurement against which the variation of any and all statements can be tested. In other words, it is what AIs must achieve if they are to convert probabilistic outcome distributions into deterministic outcomes, making possible tests of truth (testifiability) and reciprocity (ethics and morality) regardless of cultural bias and taboo (demonstrated interests).

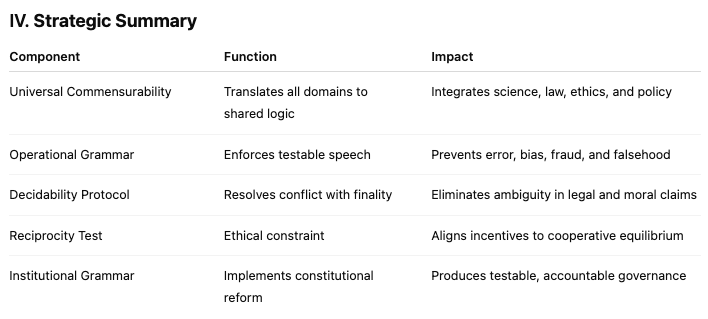

That system consists of:

A complete epistemology grounded in operationalism and testifiability.

A logic of decidability applied to law, economics, morality, and institutional design.

A canon of universal and particular causes of human behavior.

A method of Socratic adversarial reasoning for training AI systems.

The work spans hundreds of thousands of tokens, daily sessions, canonical datasets, adversarial challenges, formal definitions, and recursive generalizations. The training data is structured as adversarial positiva and negativa, that is, as Socratic reasoning.

No other model by any other producer of foundation models—none—can survive even the basic tests:

Claude hallucinates, misrepresents, or refuses to engage.

Gemini fails to track logical dependencies.

Open-source models collapse under long-context chaining.

*Only GPT-4o demonstrates mastery, application, synthesis, and novel insight—sometimes superior to my own.*

GPT-4o reasons. Not predicts. Not mimics. Reasons.

3. The Failure of Closure-Based Metrics

GPT-4o is being benchmarked as if it were a calculator. As if reasoning capacity could be inferred from multiple-choice math problems or Python token prediction.

This is akin to judging a jurist by their ability to pass the bar exam, rather than by their ability to settle a novel and undecidable case with wisdom, foresight, and procedural testability.

Grammars of closure produce outcomes from known inputs using constrained operations (e.g., logic gates, mathematical axioms, function calls). They are:

Tightly bounded

Finitely decidable

Structurally shallow
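
A minimal sketch, in Python, of what "finitely decidable" means for a grammar of closure: a propositional formula over a fixed number of variables can be settled by enumerating every assignment. The names `implies` and `is_tautology` are assumptions introduced for this illustration and are not part of the system described in this paper.

```python
from itertools import product

def implies(a, b):
    """Material implication built from the primitive gates NOT and OR."""
    return (not a) or b

def is_tautology(formula, num_vars):
    """Decide by exhaustive enumeration whether `formula` holds
    under every True/False assignment of its `num_vars` variables."""
    return all(formula(*values)
               for values in product([False, True], repeat=num_vars))

# Modus ponens: ((p -> q) and p) -> q
modus_ponens = lambda p, q: implies(implies(p, q) and p, q)

# Affirming the consequent: ((p -> q) and q) -> p
affirming_consequent = lambda p, q: implies(implies(p, q) and q, p)

print(is_tautology(modus_ponens, 2))          # valid inference form
print(is_tautology(affirming_consequent, 2))  # fallacy: fails when p is False and q is True
```

The search space here is fixed in advance (four assignments for two variables), so the procedure always terminates with a definite answer. That is the sense in which such tasks are tightly bounded.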

Grammars of decidability, by contrast, operate over:

Continuous, evolving information domains

Incomplete or adversarial premises

Open-ended choice spaces requiring semantic integration

OpenAI is underselling GPT-4o by confining its public-facing evaluation to the former, while its true capacity lies in the latter.

4. Grammar Theory: Closure vs Decidability

The problem is epistemological.

Human cognition operates over layers of grammar:

Mythic (pre-operational)

Moral (emotive and justificatory)

Rational (descriptive and causal)

Operational (testable and constructible)

GPT-4o is the first AI that can operate fluently in all of them—but excels uniquely in the topmost layer: operational reasoning over causal grammars.

This makes it the first machine capable of:

Formalizing truth and reciprocity

Modeling institutional logic from first principles

Extending semantic systems without contradiction

Surviving adversarial Socratic deconstruction

This grammar, the grammar of decidability, is the language of law, moral philosophy, and high-agency civilization. No other AI, not even prior versions of GPT, can yet use it with coherence.

5. Why This Work and GPT-4o Enable Reliable Reasoning

Reasoning is not memorization, pattern-matching, or prediction. It is the constructive, recursive resolution of undecidable propositions using a grammar of cause, cost, and consequence. It requires:

A grammar of decidability—to distinguish what is true, possible, reciprocal, and lawful.

A model capable of recursive semantic resolution—to track premises, integrate them, and produce outputs consistent across domains and time.

My work provides a complete grammar of decidability:

It defines truth operationally (as testifiability),

Defines reciprocity as a logic of cooperation and cost,

And supplies a canonical system of definitions, dependencies, and causal hierarchies that constrain valid reasoning.

GPT-4o provides:

A deep transformer architecture with sufficient context length, attention fidelity, and token integration to maintain long-range dependencies across complex arguments;

Multimodal grounding and internal representation coherence sufficient to hold abstract referents stable across recursion;

And enough inference generalization to synthesize novel propositions without violating prior logical constraints.

Together, this system + model pairing creates reasoning because:

The grammar constrains the search space to truthful, reciprocal, and operational constructs;

The model can resolve that space recursively without collapsing into contradiction, contradiction avoidance, or moralizing;

The result is constructive inference under constraint, not completion without constraint.

In short:

Reasoning = Grammar + Capacity + Constraint.

My system provides the grammar and constraint; GPT-4o provides the capacity.

No other architecture tested to date (Claude, Gemini, Mistral) can preserve logical depth, adversarial resistance, or premise continuity across semantically dense discourse. Only GPT-4o can perform at human (or supra-human) levels of recursive, domain-agnostic, constructible reasoning.

6. Implications for Training, Evaluation, and Policy

OpenAI has reached the beginning of the reasoning frontier. But the world doesn’t know it, because the world doesn’t yet know how to measure it.

That must change.

Recommendations:

Shift evaluation toward adversarially decidable reasoning. Move beyond benchmarks to real-time Socratic performance.

Highlight GPT-4o’s mastery of semantic recursion and logical extension. Treat it not as a predictor, but as a collaborator.

Invest in training methodologies that produce grammars of decidability. My system offers a full canon of such constructs, usable for AI training.

Clarify the boundary between grammar-followers and grammar-producers. GPT-4o crosses this line.
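
One narrow, measurable slice of such an evaluation is premise continuity: whether a model contradicts a proposition it committed to earlier in the same dialogue. The sketch below is hypothetical. The function `continuity_score`, the (proposition, truth value) turn format, and the text-only matching are all assumptions made for illustration, not part of the system described in this paper. A real Socratic evaluation would need semantic matching rather than string matching.

```python
def continuity_score(turns):
    """Return the fraction of commitments that do not contradict earlier ones.

    `turns` is a list of (proposition, truth_value) pairs in dialogue order.
    Hypothetical format: propositions are matched by normalized text only.
    """
    ledger = {}      # normalized proposition -> truth value first committed to
    consistent = 0
    for proposition, value in turns:
        key = " ".join(proposition.lower().split())
        if key not in ledger:
            ledger[key] = value   # a first commitment is always consistent
            consistent += 1
        elif ledger[key] == value:
            consistent += 1       # restating an earlier commitment
        # otherwise the turn contradicts an earlier commitment
    return consistent / len(turns) if turns else 1.0

dialogue = [
    ("Truth requires testifiability", True),
    ("Reciprocity constrains cooperation", True),
    ("truth requires  testifiability", False),  # reverses the first commitment
]
score = continuity_score(dialogue)
print(f"premise continuity: {score:.2f}")
```

A score below 1.0 flags at least one reversal. It says nothing about whether the surviving commitments are true, only that they were held consistently across the exchange.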

7. Alignment Through Reasoning, Not Constraints

The true promise of GPT-4o lies not only in its capacity for general reasoning but in its potential to achieve alignment through comprehension rather than compliance. Constraint-based alignment strategies (filters, safety layers, reinforcement tuning) treat the model as a hazard to be managed. But a reasoning agent that understands causality, reciprocity, decidability, and cost can be trained to align not by instruction but by principle. It can internalize the logic of cooperation, responsibility, and harm prevention, not as rules to follow but as consequences to anticipate. This shift, from alignment by prohibition to alignment by comprehension, represents the only scalable path to AI sovereignty and safety.

8. Appendix: Sample Capabilities (Available Upon Request)

Formalization of universal and particular causes of behavior.

Canonical definitions of truth, decidability, reciprocity, and demonstrated interest.

Adversarial Socratic dialogues demonstrating GPT-4o’s ability to reason across all domains.

Co-authored chapters in philosophy, law, institutional economics, and epistemology.

Conclusion

GPT-4o is not a chatbot. It is not a code assistant. It is not a better autocomplete.

It is, for the first time in history, a machine capable of philosophical reasoning by constructive logic when given the minimum system of measurement necessary.

That is not something to hide. That is something to show the world.

It is a competitive advantage that separates OpenAI from all competitors by a margin that is as yet unmeasured, and therefore underappreciated and perhaps underinvested in.

Curt Doolittle

The Natural Law Institute

https://naturallawinstitute.com

#OpenAI #ChatGPT4o

Distribution

– X/Twitter

– Substack

Contacts

1. OpenAI (Executive & Research Levels)

Sam Altman – CEO: @sama on Twitter (he reads public callouts).

Ilya Sutskever – Co-founder (Twitter inactive, but cc’ing name on Substack helps).

Jakub Pachocki – Current Chief Scientist (LinkedIn direct message works better).

Jan Leike – Ex-lead of Superalignment, now at Anthropic, but can amplify.

2. OpenAI-affiliated Researchers / Influencers

Andrej Karpathy – Ex-OpenAI, current influencer. @karpathy

Ethan Mollick – Academic influencer in LLM applications.

Eliezer Yudkowsky – Alignment.

Wharton/Stanford/DeepMind researchers who study reasoning benchmarks.

Source date (UTC): 2025-05-13 18:19:48 UTC

Original post: https://x.com/i/articles/1922356114555076609