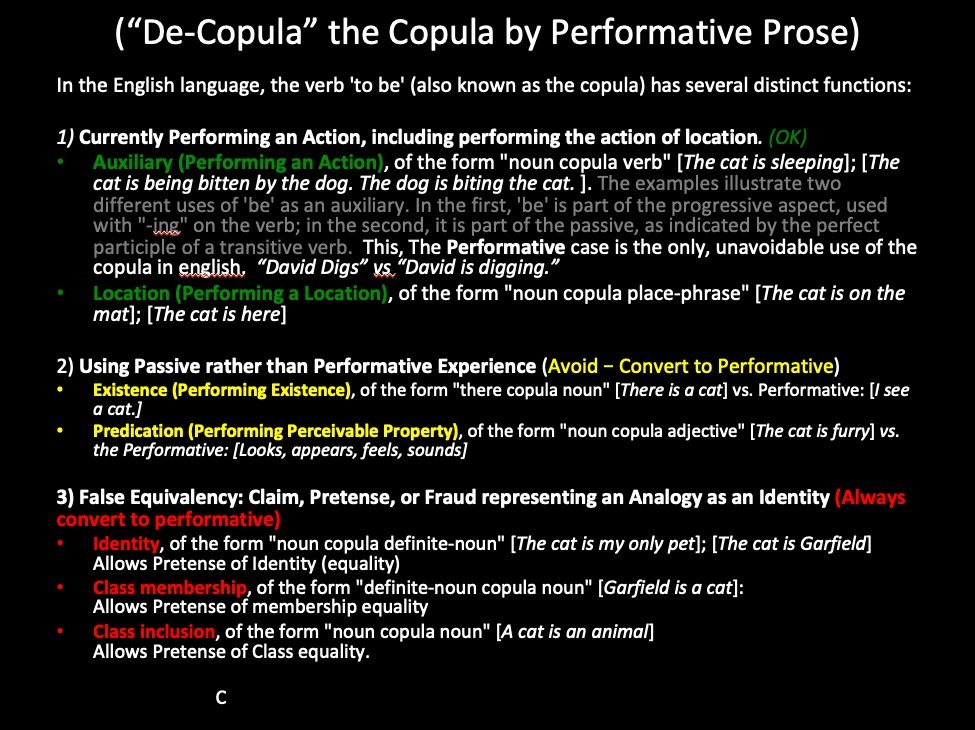

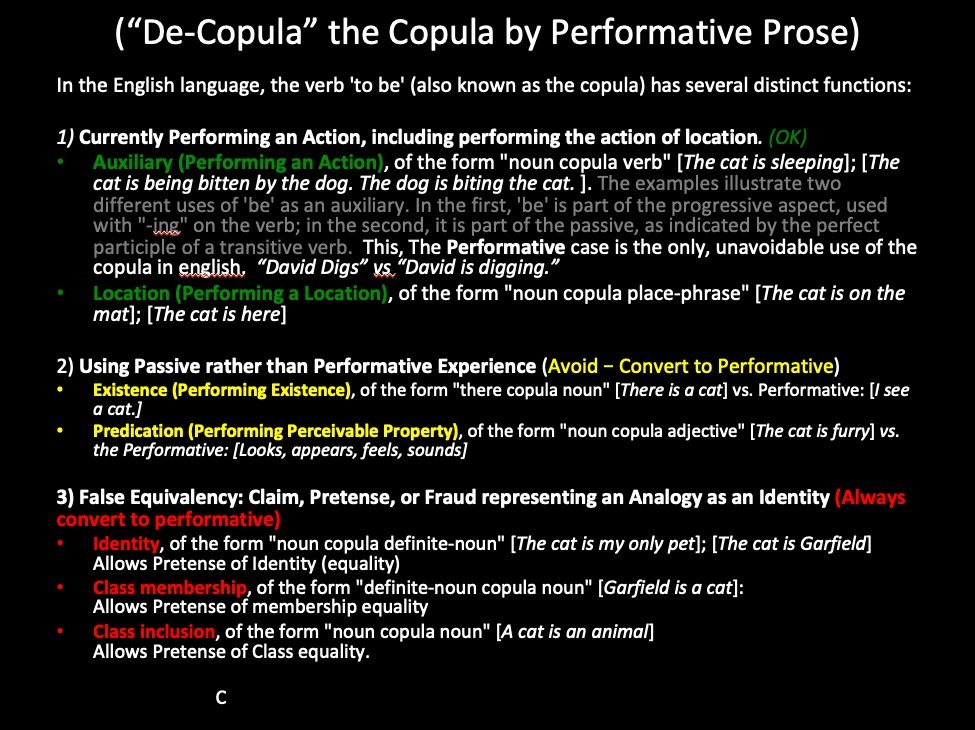

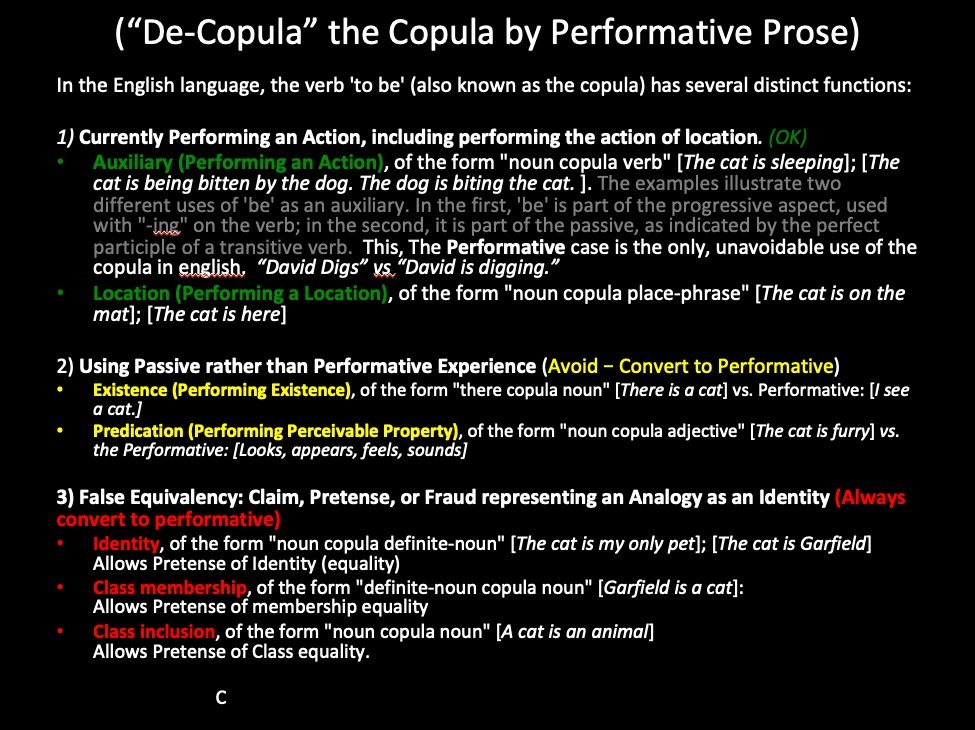

Q: For Followers. Is this slide clearer now? https://t.co/VKsT7ONBrf

Source date (UTC): 2021-04-23 15:16:23 UTC

Original post: https://twitter.com/i/web/status/1385613528816619521

@SicSemperTyrannis_1776 That’s pretty good. Yes. “What can you write with this combination of types?” The continuous combination of operations, like the recombinant potential of language, the potential of n dimensions in math, or the combinations of applied chemistry, biochemistry, or genetics, is infinite. But it has to be constructible.

Source date (UTC): 2021-04-22 01:49:23 UTC

Original post: https://gab.com/curtd/posts/106106464735657239

COMMENT ON THE PRESENT CONDITION OF AI (and the possibility of the next AI winter … or not)

https://propertarianinstitute.com/2021/04/19/comment-on-the-present-condition-of-ai/

Source date (UTC): 2021-04-19 20:05:57 UTC

Original post: https://twitter.com/i/web/status/1384236850647162884

It’s fking impossible. I’ve added a JS modification to Twitter so that it stops the pink coloring of long texts. I can take a snapshot and post an image of the text, but even so, it’s still too short. So is Gab. It really does take 4K characters to make a full argument.

Source date (UTC): 2021-04-18 20:02:10 UTC

Original post: https://twitter.com/i/web/status/1383873509030383618

Reply addressees: @LukeWeinhagen @ThruTheHayes @juniorwolf @G_Eats_Midwits

Replying to: https://twitter.com/i/web/status/1383871123352297479

@Hail Gab doesn’t allow chaining of posts the way Twitter does, nor does it have a sufficient character limit like Facebook. So we are stuck starting with a post and adding to it with comments.

Source date (UTC): 2021-04-17 20:50:02 UTC

Original post: https://gab.com/curtd/posts/106082638419285760

So the only means of defeating competitors (or enemies) with technology is to deprive them of the economic returns of any given technology.

This is what I hope to achieve with Runcible. https://twitter.com/curtdoolittle/status/1381650823797739523

Source date (UTC): 2021-04-12 16:55:33 UTC

Original post: https://twitter.com/i/web/status/1381652220433862656

https://twitter.com/curtdoolittle/status/1381650823797739523

Had to add the text to the title of the tweet to make it searchable.

The content of the slide no longer contains the self-reference. 😉

Source date (UTC): 2021-04-11 17:30:22 UTC

Original post: https://twitter.com/i/web/status/1381298592967712769

Reply addressees: @WorMartiN

Replying to: https://twitter.com/i/web/status/1381291164557586442

Yeah, I think that the future (at least my product includes it) is more like Scrivener than Word or any alternative. Lots of reasons.

Source date (UTC): 2021-04-07 18:31:21 UTC

Original post: https://twitter.com/i/web/status/1379864389138931715

Reply addressees: @skyfire1201

Replying to: https://twitter.com/i/web/status/1379861053589233670